🍰 Cake's Take on uncomfortable but necessary questions. This week: where does your sample actually come from?

If you've ever commissioned online research, like a nationally representative study or a survey of 300 grocery shoppers, you probably felt it was a solid base for decision-making. But did you ever ask where those people came from? How were they recruited? What checks were done to make sure they were real people?

The research industry is talking about data quality a lot, but the people commissioning research and basing real decisions on the results aren't always part of those conversations. I understand why: it feels like the provider's responsibility.

But last year the Market Research Society (MRS) estimated that data quality issues cost the industry £209 million annually. The indirect cost of business decisions based on unreliable data is harder to size.

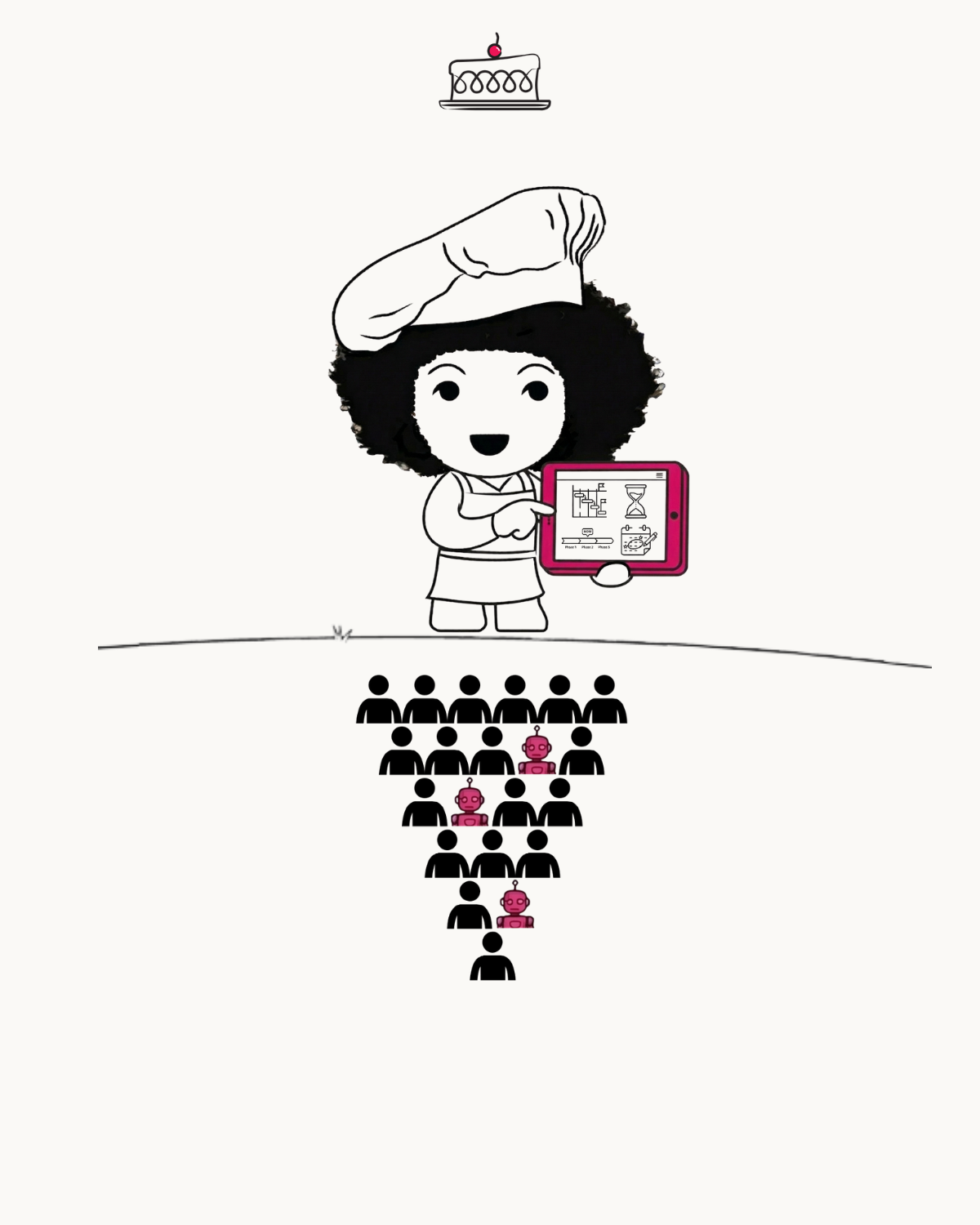

Part of the problem is structural. Some online panels operate through router systems, where people who don't qualify for one survey are automatically redirected to another. By the time they reach your survey, they may have answered loads of screening questions yet be offered a very low 'reward' for their time, which can leave little incentive to answer carefully.

There's also fraud: survey completion by ‘bots’ (automated fake respondents) is a growing problem. Some bots can imitate human response patterns and get past basic attention checks.

In reality, how much difference does this make?

In my experience, it can be huge and costly. A client once asked me for help with a dataset that included all sorts of contradictory answers. After a lot of digging I could separate the questionable stuff from the real story (but it took time!).

In a more rigorous test, the approach used made the difference between having to remove 6% and 32% of the data in a study. This was a study among 18-30 year olds, run by a UK panel provider that decided to put its own approaches to the test. Something I always like to see!

Whatever your research budget, the decisions you're making off the back of data are only as good as the data itself.

Here are some questions I think are worth asking when you’re talking to insight partners about your next brief:

- Where is the sample coming from? Is it a managed panel, an aggregator (a platform that pulls respondents from multiple sources), or river sampling (where people are recruited from ads/pop-ups)?

- How are they rewarded?

- What quality checks happen, both during and after fieldwork?

- What % of data typically gets removed during cleaning?

If you have a study coming up and you want to feel confident that the data will be worth acting on, I’d be happy to have a quick chat to talk through what to look for. Please use the link in the comments.

#CakesTake #CakeConsulting #MarketResearch #DataQuality #QuestionEverything

Sources: MRS.org.uk, Operations Network, David Sutterby; Yonder Data Solutions, "Not all panels are created equal".